Zero to Agentic Data: A Guide to Snowflake Intelligence

I recently attended the 'Data for Breakfast' series that Snowflake - an enterprise data warehouse platform - is organizing globally this month. My aim was to understand how best to leverage the agentic AI setup that Snowflake offers out of the box with its proprietary Cortex suite. Here are my reflections on the event, along with a practical roadmap for scaling context-building and agentic capabilities on top of your enterprise data.

(Above: At the Snowflake event)

Where most organizations are

A data platform has to deal with a multitude of source-system data points, spread across the data maturity landscape (bronze -> silver -> gold). BI capabilities are mostly built on top of analytical data products: data that starts out mirroring the source one-to-one and is then cleansed and transformed to reflect business context. The traditional approach has been to expose these 'gold' layer tables to business consumers and let them (or a centralized data team) build insights on top. Generating those insights was typically time-consuming, and even then, business users who weren't comfortable building their own data models were constrained to the metrics in a dashboard.

This is where AI agents promised to step up and change the game for good. However, it quickly became clear that text-to-SQL was never the real challenge. For AI agents to perform reliably on your data, there has to be a solid contextual layer between the physical data and your agents. Think of it like 'product portfolios': each data product holds out an introduction card to any agent that would like to understand more about it. Once the semantic layer is available and refined, agents can be expected to be far more accurate, with lower hallucination rates, than agents working without that context.

The Snowflake Setup

Being a market leader, and actively competing with Databricks, Snowflake launched a gamut of get-data-ready-for-AI features soon after the AI transformation wave began to sweep through enterprises. Besides traditional ML and inferencing capabilities, they have launched Cortex Search (to enable RAG use cases on unstructured data), Cortex Analyst (which helps build semantic context on data points) and Agents, which combine all of these capabilities into one package.

Even before you build an agentic capability, it is critical to get the data in order. What do I mean by that? Agents perform best when they have some kind of logical structure to follow. If your business is in retail, but you happen to have a monolithic database with all your data domains in one single schema, that is poor data organization. A very good way to split data is the data mesh approach, where databases are separated by logical boundaries according to the domains they serve. In that case, the Sales division would have its own database, Finance its own, HR theirs, and so on. Of course, each of these domains would then branch out further into individual datasets ("groups of tables") that address frequent business needs.

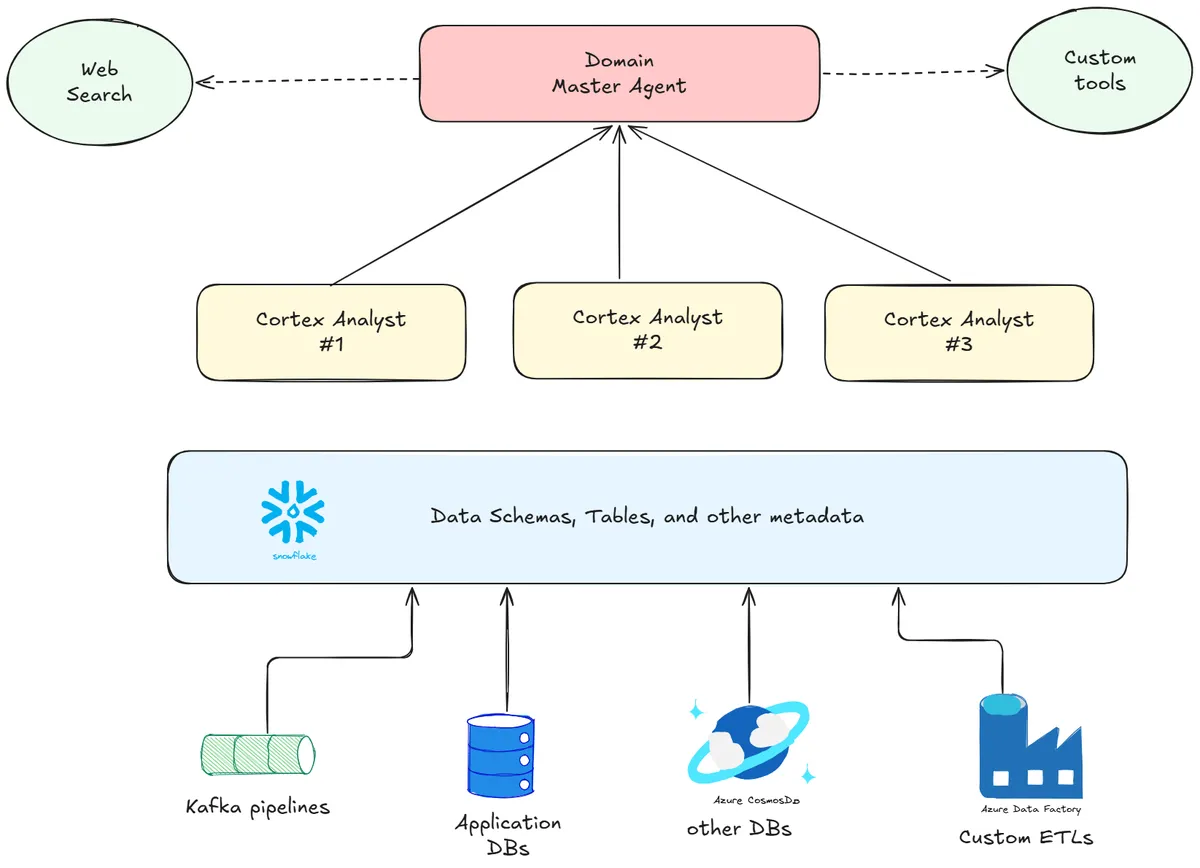

Once a data mesh approach is in place, here is a structure to start building the agentic layer.

For each dataset - or logical utility in a business data domain - we have a Cortex Analyst evaluating a Semantic View (the context layer). These Cortex Analysts are then fed into the master domain agent, which is further powered by tools like external web searches or other custom integrations.
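As a mental model only, the layering can be sketched in a few lines of Python. This is not Snowflake's API: the class names `SemanticView`, `AnalystTool` and `DomainAgent`, the example view names, and the keyword-based routing are all assumptions made up for illustration. A real agent would let an LLM pick the tool rather than match keywords.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class SemanticView:
    """Context layer for one dataset (hypothetical stand-in, not a Snowflake class)."""
    name: str
    keywords: set[str]  # business terms this dataset can answer questions about


@dataclass
class AnalystTool:
    """One 'analyst' per dataset, bound to that dataset's semantic view."""
    view: SemanticView

    def can_answer(self, question: str) -> bool:
        words = set(question.lower().split())
        return bool(words & self.view.keywords)


@dataclass
class DomainAgent:
    """Master agent for one business domain; it chooses among its analyst tools."""
    domain: str
    analysts: list[AnalystTool] = field(default_factory=list)

    def route(self, question: str) -> str:
        for analyst in self.analysts:
            if analyst.can_answer(question):
                return analyst.view.name
        # Nothing in the semantic layer matches: fall back to an external tool.
        return "fallback: external tool (e.g. web search)"


# Hypothetical Sales domain with two datasets.
sales_agent = DomainAgent(
    domain="Sales",
    analysts=[
        AnalystTool(SemanticView("ORDERS_SEMANTIC_VIEW", {"orders", "revenue"})),
        AnalystTool(SemanticView("RETURNS_SEMANTIC_VIEW", {"returns", "refunds"})),
    ],
)

print(sales_agent.route("total revenue last quarter"))
print(sales_agent.route("weather in Kolkata today"))
```

The point of the sketch is the shape: one context layer per dataset, one analyst per context layer, and a single domain agent sitting on top of them.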

Evaluating Snowflake Intelligence on a Niche Data Setup

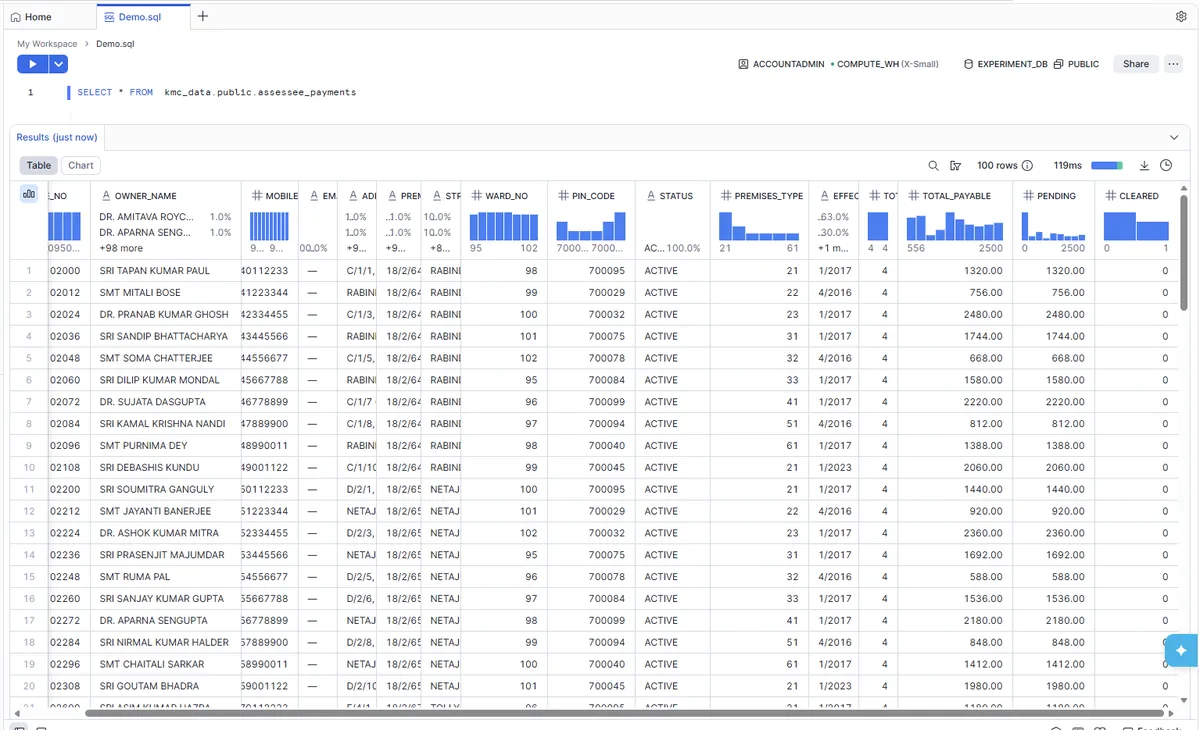

My roots are in Kolkata, an eastern metropolis in India. Some time back, while paying property tax, I noticed that the data on outstanding tax payments could be a nice use case for the Government to analyze. Using my own data as an anchor, I generated synthetic data to create a database of around 100 rows. Note that in Indian cities, the PIN Code is roughly the equivalent of a US ZIP Code, and Wards are divisions in the municipal structure. This is a snip of the synthetic dataset. There are also dimensions around the Premises and PIN Codes available in the database, conspicuously named KMC_Data. I must say I also picked this because tax notices are a rather niche category, with most industries operating outside of GovTech platforms. This would, therefore, be a good trial of Snowflake's AI abilities to infer context from the dataset at hand.

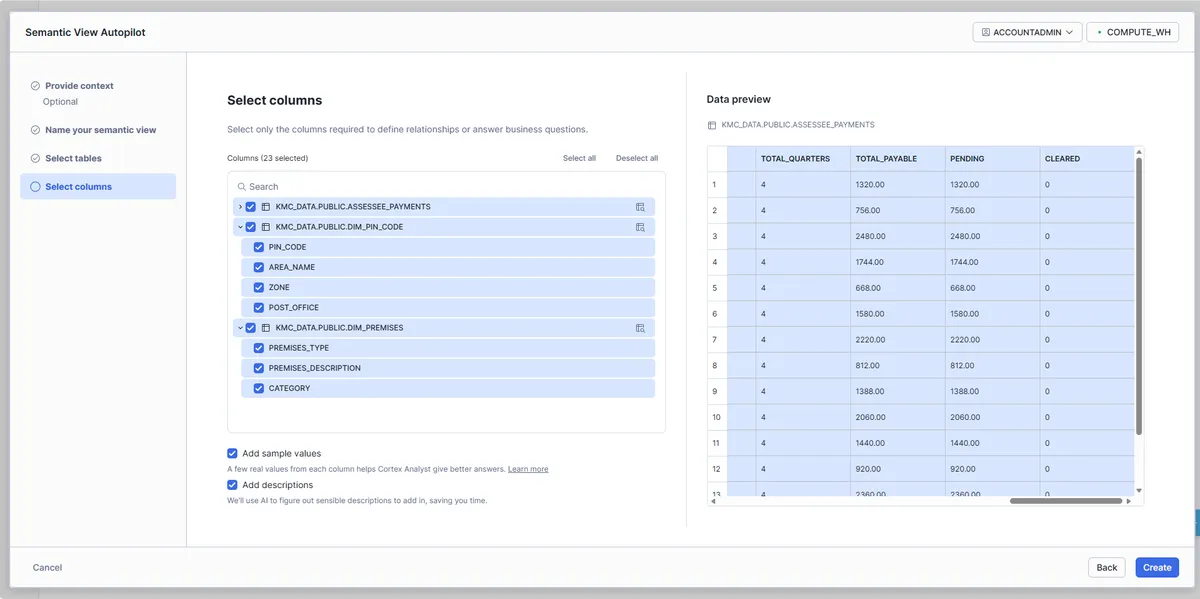

The first step is to create a Cortex Analyst with minimal effort, to get a lift-off into the context-building exercise. Head over to AI/ML > Cortex Analyst and create a semantic view. Much like other functionalities in Snowflake, the semantic view is a custom Snowflake object. Hence, if you need to remove one, you can use the DROP SEMANTIC VIEW command.
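For cleanup, a small helper can assemble that statement. This is a minimal sketch: the schema name `PUBLIC` is an assumption (only the database and view names appear in this article), the `IF EXISTS` guard is the usual pattern on Snowflake DROP statements and is worth confirming against the reference, and the helper only builds the SQL text; running it would need your own Snowflake session.

```python
import re

# Conservative check for unquoted identifiers, so arbitrary text cannot be
# spliced into the statement.
_IDENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*$")


def drop_semantic_view_sql(database: str, schema: str, view: str) -> str:
    """Build a DROP SEMANTIC VIEW statement for a fully qualified name."""
    for part in (database, schema, view):
        if not _IDENT.match(part):
            raise ValueError(f"unsafe identifier: {part!r}")
    return f"DROP SEMANTIC VIEW IF EXISTS {database}.{schema}.{view}"


# 'PUBLIC' is an assumed schema name, used here only for illustration.
statement = drop_semantic_view_sql("KMC_DATA", "PUBLIC", "KMC_SEMANTIC_VIEW")
print(statement)
```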

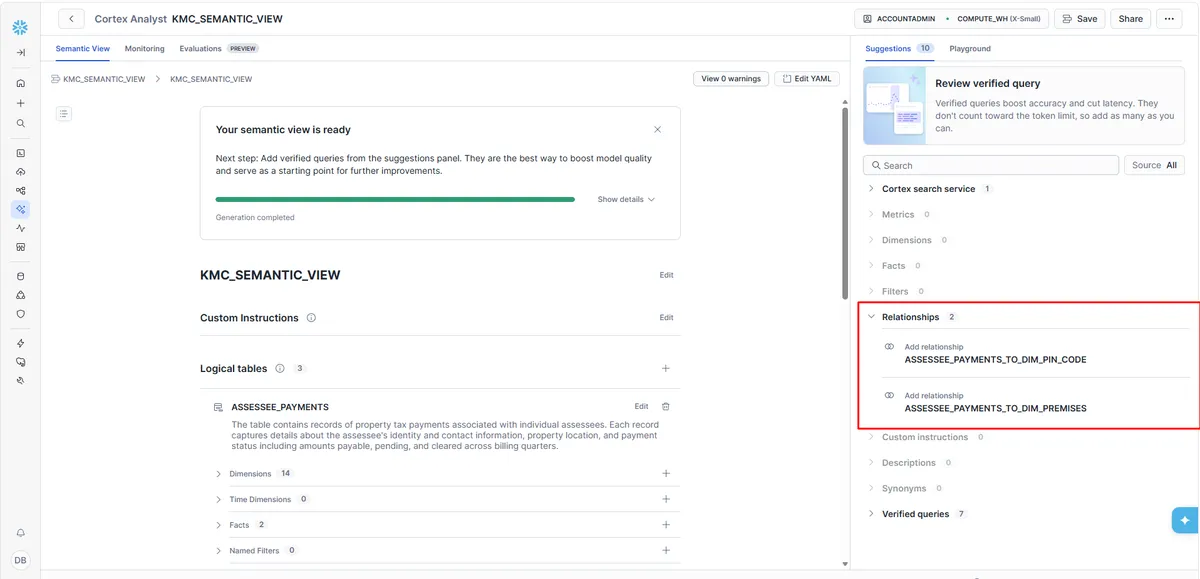

The screenshot above shows the last stage of the Semantic View autopilot. It prompts the user to select the attributes that should be processed to build the context. Once done, Cortex builds the YAML internally and then presents a review layer. The best part is that it is also able to automatically figure out the relationships between the different dimension and fact tables! These need to be verified, and once you confirm them, they are also recorded in the consolidated semantic view. There is also a feature to add trusted queries. These give higher reliability on common query patterns that the developer can pre-build into the semantic layer, which in turn reduces errors in the physical SQL that gets generated.
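To make the review step concrete, here is a rough Python picture of the kind of information such a semantic layer carries. It is deliberately not the YAML that Snowflake generates: the dictionary keys, the table names and every column except `PENDING` are assumptions invented for this sketch, and `review_issues` is a hypothetical helper that mimics the "verify the inferred relationships" step.

```python
from __future__ import annotations

# Illustrative structure only; the keys do not follow Snowflake's YAML schema.
semantic_model = {
    "name": "KMC_SEMANTIC_VIEW",
    "tables": {
        "TAX_NOTICES": ["PREMISES_ID", "PIN_CODE", "WARD", "PENDING"],
        "PIN_CODES": ["PIN_CODE", "AREA_NAME"],
        "PREMISES": ["PREMISES_ID", "CATEGORY"],
    },
    # Joins inferred automatically; a human still has to confirm each one.
    "relationships": [
        {"from": ("TAX_NOTICES", "PIN_CODE"), "to": ("PIN_CODES", "PIN_CODE"), "confirmed": True},
        {"from": ("TAX_NOTICES", "PREMISES_ID"), "to": ("PREMISES", "PREMISES_ID"), "confirmed": False},
    ],
    # Pre-built question/SQL pairs for common query patterns.
    "trusted_queries": [
        {
            "question": "What is the total outstanding payment?",
            "sql": "SELECT SUM(PENDING) FROM TAX_NOTICES",
        }
    ],
}


def review_issues(model: dict) -> list[str]:
    """List relationships that point at unknown columns or are still unconfirmed."""
    issues = []
    tables = model["tables"]
    for rel in model["relationships"]:
        for table, column in (rel["from"], rel["to"]):
            if column not in tables.get(table, []):
                issues.append(f"unknown column {table}.{column}")
        if not rel["confirmed"]:
            issues.append(f"unconfirmed join {rel['from'][0]} -> {rel['to'][0]}")
    return issues


print(review_issues(semantic_model))
```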

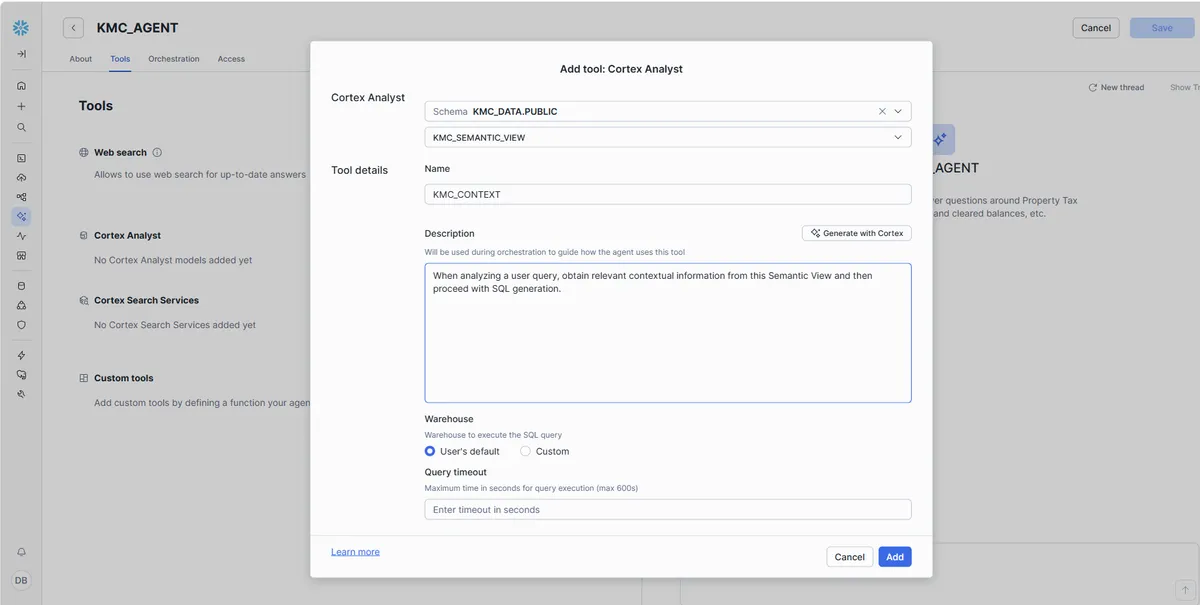

Once the Cortex Analyst is ready - and to keep things simple, we create only one such 'analyst' - the next step is to set up the master domain agent, as described in the outline structure earlier. Agents are nothing but a brain operating with a set of 'tools' - in Snowflake's case, tools can rely on the semantic layer, or be custom tools like web searches. Let's add our KMC_SEMANTIC_VIEW analyst as a tool in the KMC_AGENT object.

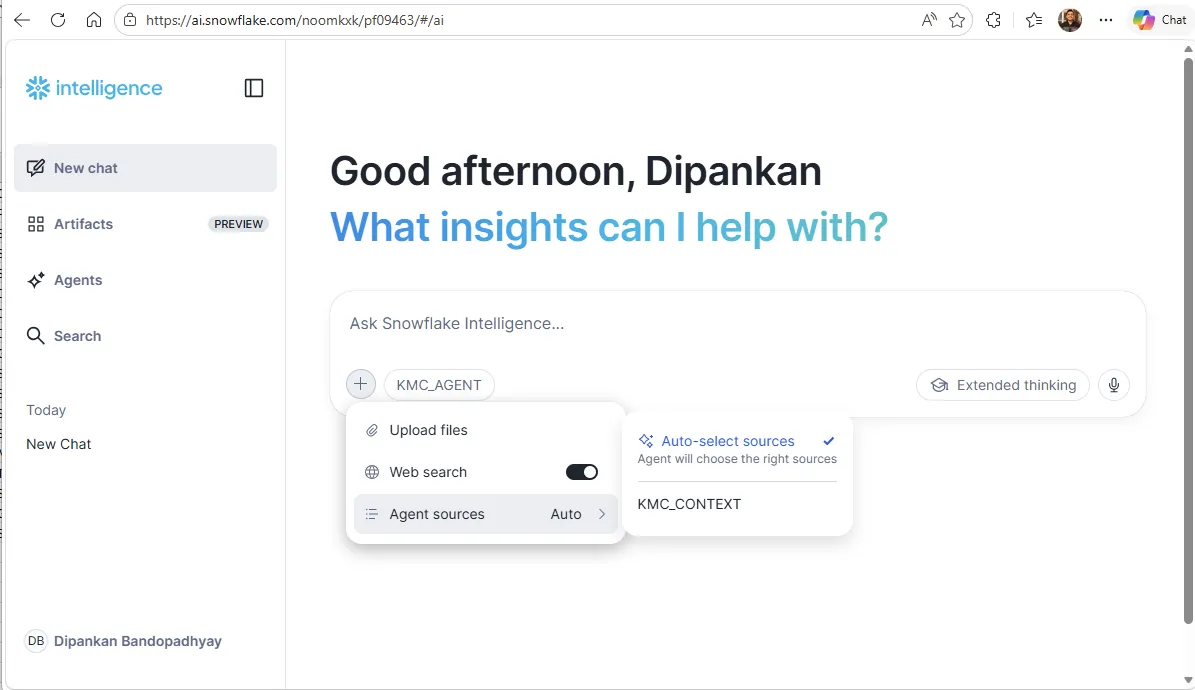

Once done, the agent can be accessed from the Snowflake Intelligence utility. Snowflake Intelligence is a dedicated portal that can be customized to match the organization's branding, giving business users and curious minds a way to query data using the semantic context already made available to Snowflake in the previous stages.

Playing around

Snowflake's trial accounts limit conversational abilities with Snowflake Intelligence. A pity, but understandable. Therefore, I headed over to the Cortex Playground to test the effectiveness of the semantic layer we built earlier.

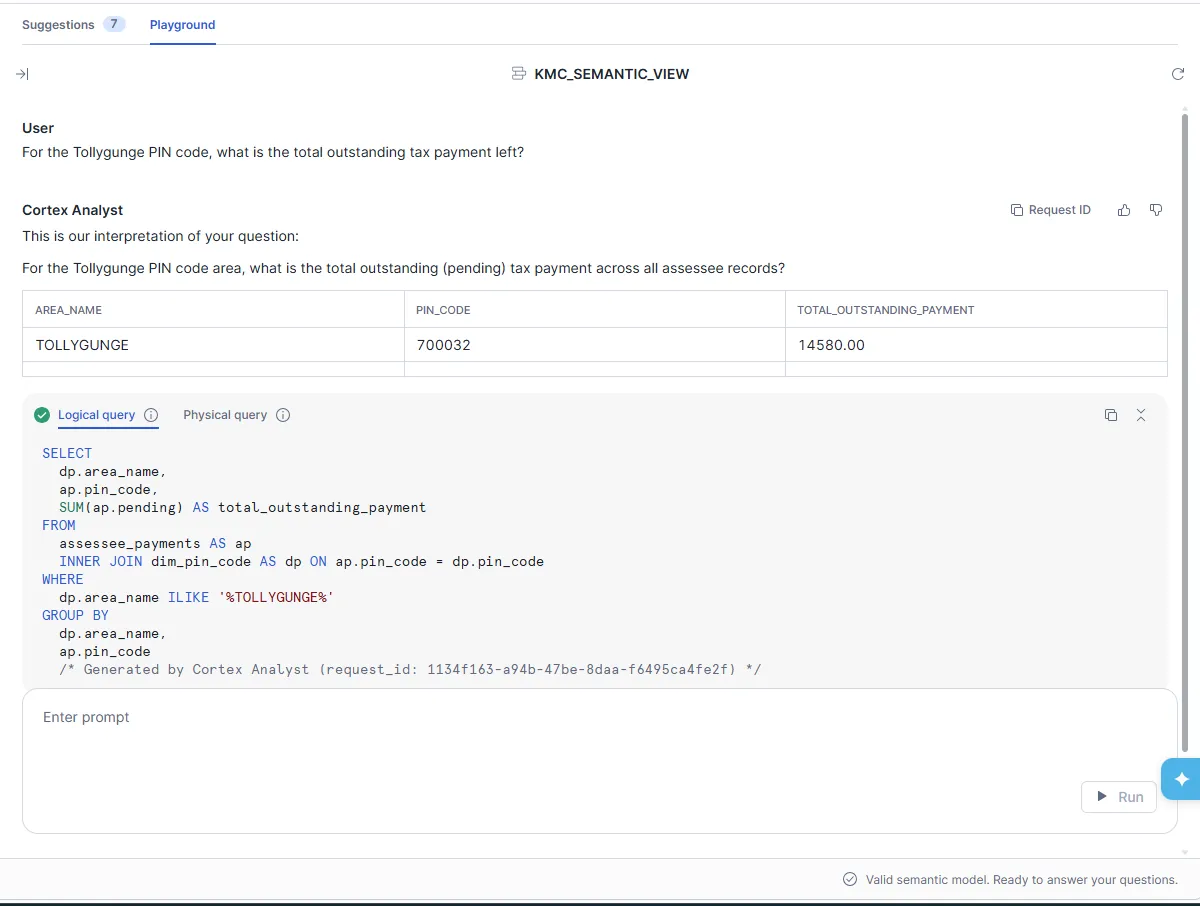

My first question was:

For the Tollygunge area, what is the total outstanding payment left?

To answer this question, the agent has to figure out the PIN Code from the relevant dimension table for the Tollygunge area, filter the dataset with that PIN Code, and then compute the sum total for the PENDING column. To my surprise, it one-shotted the question!
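Written by hand, those three steps (look up the PIN Code, filter, sum) might look like the query below. This is a sketch using Python's built-in `sqlite3` rather than Snowflake: the table names, the column names other than `PENDING`, and all the rows are made up for illustration and are not the actual KMC_Data schema or the SQL the agent generated.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Hypothetical dimension and fact tables with illustrative rows.
    CREATE TABLE pin_codes (pin_code TEXT PRIMARY KEY, area_name TEXT);
    CREATE TABLE tax_notices (
        notice_id INTEGER PRIMARY KEY,
        pin_code  TEXT REFERENCES pin_codes (pin_code),
        ward      INTEGER,
        pending   REAL
    );

    INSERT INTO pin_codes VALUES ('700033', 'Tollygunge'), ('700019', 'Ballygunge');
    INSERT INTO tax_notices VALUES
        (1, '700033', 97, 1200.0),
        (2, '700033', 98,  800.0),
        (3, '700019', 68, 5000.0);
    """
)

# Resolve the area name to its PIN Code through the dimension table,
# keep only the matching notices, then sum the pending amounts.
(total_pending,) = conn.execute(
    """
    SELECT SUM(t.pending)
    FROM tax_notices AS t
    JOIN pin_codes AS p ON p.pin_code = t.pin_code
    WHERE p.area_name = ?
    """,
    ("Tollygunge",),
).fetchone()

print(total_pending)  # 2000.0 for the illustrative rows above
```

The join is the part that needs context: nothing in the question mentions a PIN Code, so the agent has to know that areas are resolved through the dimension table.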

To test further, I put in one more question.

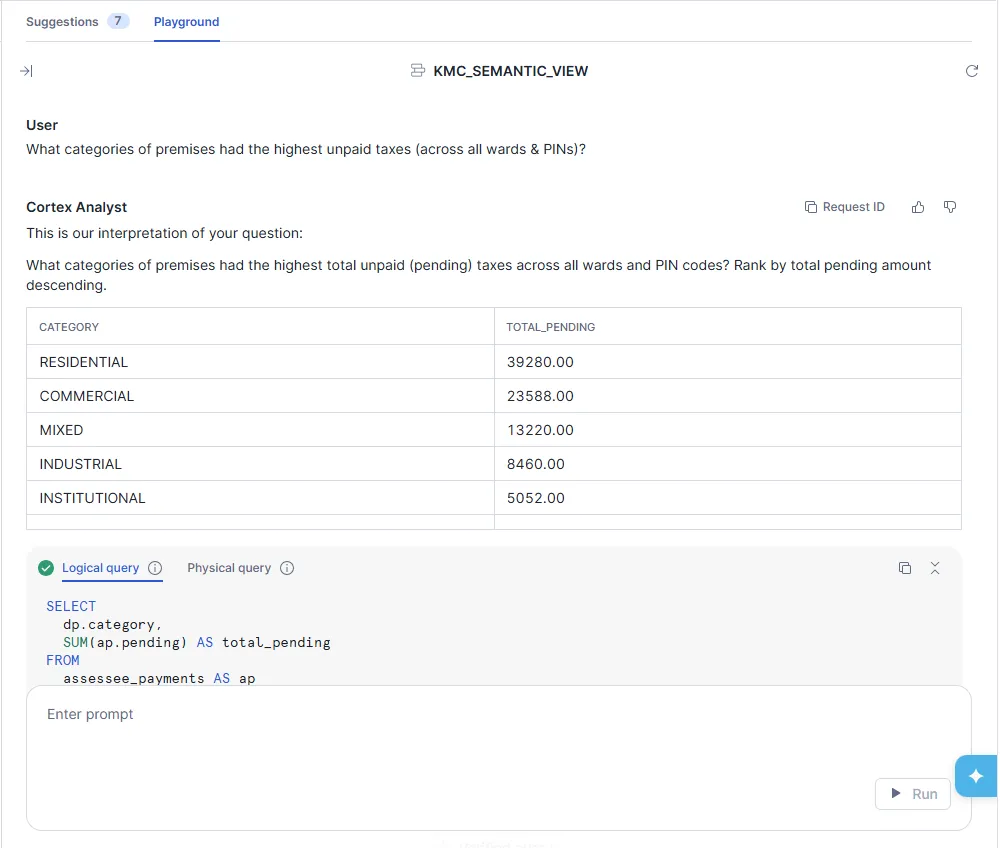

What categories of premises had the highest unpaid taxes (across all wards & PINs)?
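A question like this calls for a join to the premises dimension followed by a grouped aggregation. As a rough equivalent, again with `sqlite3` and with table names, columns (apart from `PENDING`) and rows that are all assumptions for illustration:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Hypothetical premises dimension and tax fact table.
    CREATE TABLE premises (premises_id INTEGER PRIMARY KEY, category TEXT);
    CREATE TABLE tax_notices (
        notice_id   INTEGER PRIMARY KEY,
        premises_id INTEGER REFERENCES premises (premises_id),
        pending     REAL
    );

    INSERT INTO premises VALUES (1, 'Residential'), (2, 'Commercial'), (3, 'Residential');
    INSERT INTO tax_notices VALUES
        (1, 1, 1500.0),
        (2, 2, 4000.0),
        (3, 3,  700.0),
        (4, 2,  250.0);
    """
)

# No ward or PIN filter: aggregate across everything, ranked by unpaid amount.
ranking = conn.execute(
    """
    SELECT p.category, SUM(t.pending) AS total_pending
    FROM tax_notices AS t
    JOIN premises AS p ON p.premises_id = t.premises_id
    GROUP BY p.category
    ORDER BY total_pending DESC
    """
).fetchall()

for category, total in ranking:
    print(category, total)
```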

The agent handled this one comfortably as well.

Reflections and Next Steps

This is clearly a significant advancement. With Snowflake having simplified the infrastructure side, context-building becomes largely a one-time effort (assuming that data dimensions and relationships do not change rapidly). From a security perspective, Snowflake also executes queries under Role-Based Access Control (RBAC) privileges, so only people who could otherwise query a table can get information from it back through the agent.
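The effect of that RBAC behaviour can be mimicked in a few lines. This is only a toy model: Snowflake enforces privileges inside the platform, and the role names, table names and the `ask_agent` function here are hypothetical.

```python
from __future__ import annotations

# Hypothetical grants: which tables each role is allowed to SELECT from.
GRANTS: dict[str, set[str]] = {
    "TAX_ANALYST": {"TAX_NOTICES", "PIN_CODES", "PREMISES"},
    "HR_ANALYST": {"EMPLOYEES"},
}


def can_query(role: str, tables_needed: set[str]) -> bool:
    """A role may run a query only if it holds access to every table involved."""
    return tables_needed <= GRANTS.get(role, set())


def ask_agent(role: str, question: str, tables_needed: set[str]) -> str:
    """Answer only when the caller's role could have queried the tables directly."""
    if not can_query(role, tables_needed):
        raise PermissionError(f"role {role} lacks access to {sorted(tables_needed)}")
    return f"running generated SQL for: {question}"


print(ask_agent("TAX_ANALYST", "total pending tax", {"TAX_NOTICES", "PIN_CODES"}))

try:
    ask_agent("HR_ANALYST", "total pending tax", {"TAX_NOTICES"})
except PermissionError as err:
    print("denied:", err)
```

The agent adds no privileges of its own: a question is answerable only if the person asking could have queried the underlying tables directly.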

Snowflake Intelligence seems to be quite promising, bridging the gap between theoretical setups and a readily available data platform to build agents on top of. If your organization is using Snowflake - but has chosen a very different path to semantic modelling - drop me a note!