Real-Time Data Quality: Architecting for Instant Trust

Data Quality is an evergreen subject that finds its place in most corporate proposals. While businesses regularly look for ways to plug revenue leakages across their lines of business, they forget that the Cost of Poor Data Quality (CoPDQ) is often an invisible sword of Damocles, and pivotal decisions based on incorrect data can even lead to bankruptcy. How, then, do companies address Data Quality issues sustainably and at scale?

My own experience with data engineering across two large, listed companies has led me to believe that organizations overwhelmingly rely on a reactive DQ approach. That is, bad data is allowed to creep into source systems over time, on the assumption that it will be fixed in some downstream layer at some point. This setup usually involves BI modules that 'detect' bad data, after which defective records are exposed to the relevant teams or to specialized MDM software for cleanup. Here's the attractive part: this design is relatively easy to implement! What it does not account for, however, is the need to continually cleanse 'bad data', which often involves manual effort and full-time equivalent (FTE) hours.

The other way is to design a system that addresses the problem at its root: the source applications. In enterprises, source systems include applications such as SAP (it's everywhere), Salesforce, proprietary systems, et cetera. However, data quality is a moving target - what is of 'poor quality' today can be deemed acceptable tomorrow. Therefore, strictly embedding DQ policies within the source applications, beyond the bare minimum, is a tricky proposition. Even simple DQ policy changes would need application upgrades, leading to downtime and a management nightmare.

Introducing Data Quality as a Service

Enter Data Quality-as-a-Service (DQaaS)! DQaaS is effectively a decoupled architecture that stops semantically incorrect data (data that violates existing business rules) from being put into the source systems. The idea is simple: when a source application has a data entry event, an external validation system (the DQaaS provider) reads the event and fires a response back stating whether the record being entered is valid. This way, multiple source applications can each produce their own modular events, and then listen for validation events before committing the record to their backend application databases.
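To make the request/response contract concrete, here is a minimal sketch of what the two messages might look like. The field names (`message_id`, `source_app`, `payload`, `is_valid`, `reason`) and the coordinate values are assumptions made for illustration; the only requirement taken from the design is that the response carries enough information for the source application to match it to its own event.

```python
import json
import uuid
from dataclasses import dataclass, asdict


@dataclass
class ValidationEvent:
    """Event a source application publishes on data entry (hypothetical shape)."""
    message_id: str
    source_app: str
    payload: dict


@dataclass
class ValidationResponse:
    """Response from the DQaaS provider for one event (hypothetical shape)."""
    message_id: str  # echoes the event's ID so the source can correlate the two
    is_valid: bool
    reason: str = ""


def new_event(source_app: str, payload: dict) -> ValidationEvent:
    """Create an event with a freshly generated message ID."""
    return ValidationEvent(message_id=str(uuid.uuid4()), source_app=source_app, payload=payload)


# Illustrative values only
event = new_event("location-app", {"latitude": -60.0, "longitude": -30.0, "shore": "Onshore"})
response = ValidationResponse(event.message_id, is_valid=False, reason="coordinates are offshore")

print(json.dumps(asdict(event)))
print(json.dumps(asdict(response)))
```

Because both messages are plain JSON, any source application that can serialize a dictionary can take part, regardless of the technology it is built on.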

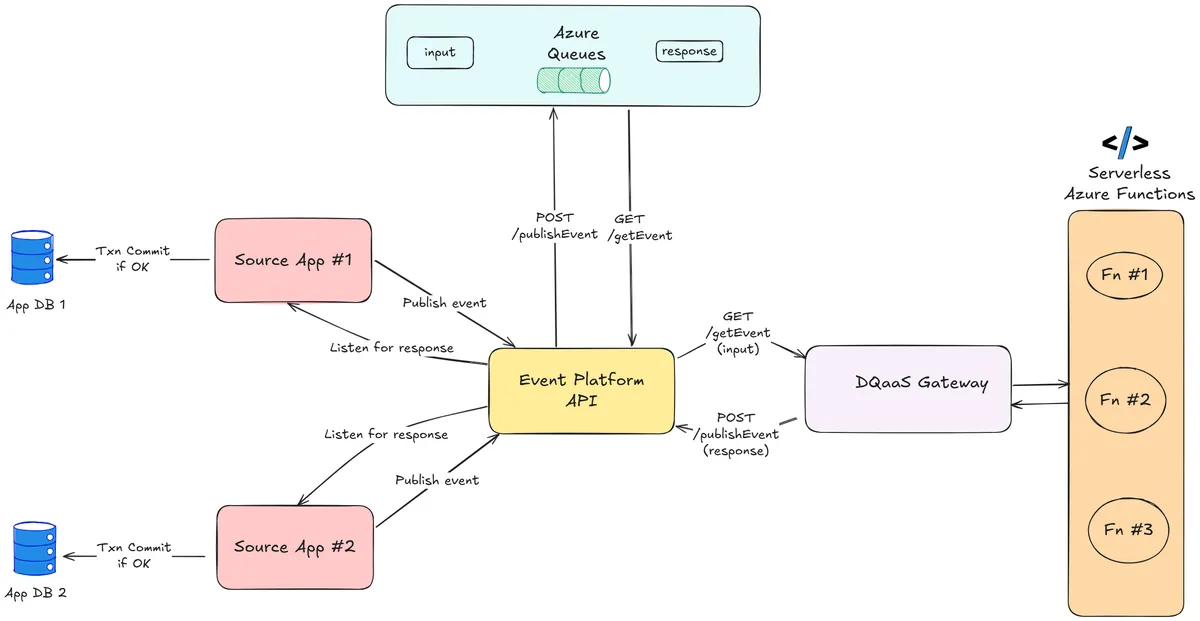

Communication happens over a message highway, typically a Service Bus, but always through a standardized Event Platform API that abstracts away the connections and transactions involving the bus. An architecture diagram is provided for reference.

The design has the following components:

- Source Application (SAP/Salesforce/etc)

- Event Platform API (Standardized API)

- Backend Streaming/Message Queue service (Azure Service Bus/Queue)

- DQaaS Gateway listener

- Microservices Catalog (Azure Functions)

Solving a Real World Problem through DQaaS

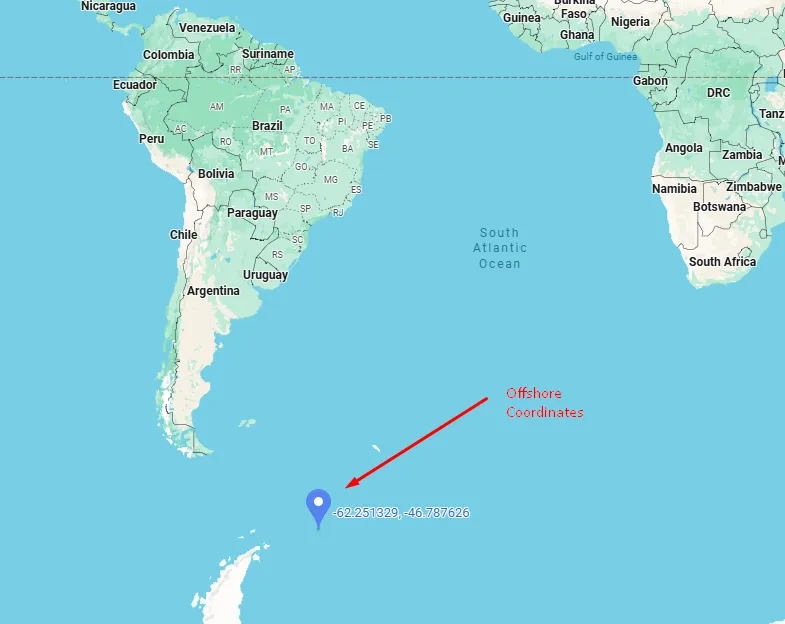

Let us assume that we are looking at some asset master data involving geospatial information. The problem statement is this:

Given that the user inputs three values - latitude, longitude, and whether the asset is Onshore/Offshore - can the input be validated? In other words, do the coordinates (lat, long) correspond to an Onshore location or an Offshore position, as stated in the entry?
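One way to frame this check is as a point-in-polygon test against land boundaries. The sketch below is not the validation logic used in this article; it assumes a made-up rectangular 'land' polygon and treats coordinates as planar, which ignores the antimeridian and the curvature of the Earth. A real validator would rely on a proper coastline dataset or a geospatial service.

```python
def point_in_polygon(lat, lon, polygon):
    """Ray-casting test: True if (lat, lon) lies inside polygon, a list of (lat, lon) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        lat1, lon1 = polygon[i]
        lat2, lon2 = polygon[(i + 1) % n]
        # Only edges that straddle the point's latitude can be crossed by the ray
        if (lat1 > lat) != (lat2 > lat):
            # Longitude at which the edge crosses the point's latitude
            cross_lon = lon1 + (lat - lat1) * (lon2 - lon1) / (lat2 - lat1)
            if lon < cross_lon:
                inside = not inside
    return inside


def validate_shore(lat, lon, shore, land_polygons):
    """Return True if the declared shore value matches where the point actually falls."""
    on_land = any(point_in_polygon(lat, lon, polygon) for polygon in land_polygons)
    actual = "Onshore" if on_land else "Offshore"
    return shore == actual


# Hypothetical 'land': a 10 x 10 degree square, not real geography
TOY_LAND = [[(0.0, 0.0), (0.0, 10.0), (10.0, 10.0), (10.0, 0.0)]]

print(validate_shore(5.0, 5.0, "Onshore", TOY_LAND))      # point inside the toy land
print(validate_shore(-60.0, -30.0, "Onshore", TOY_LAND))  # point outside the toy land
```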

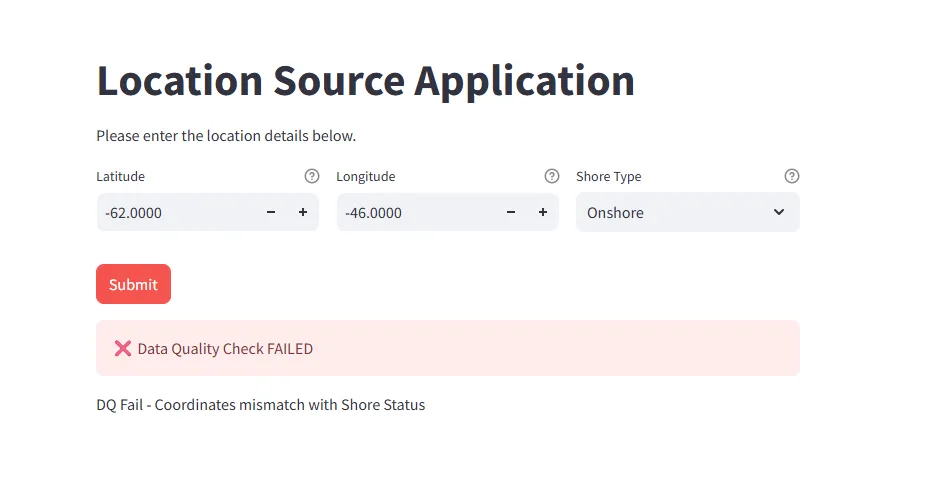

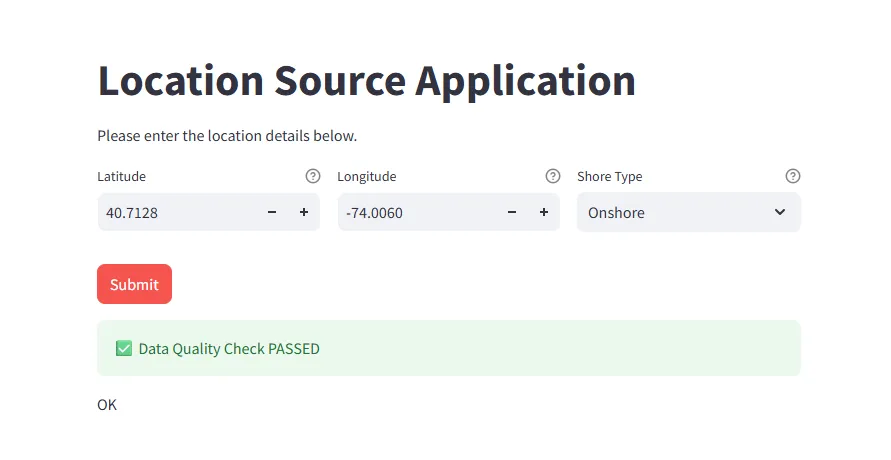

We will take an arbitrary coordinate that I've verified to be a point in the middle of nowhere (read: offshore). Very fancy place - close to Antarctica!

We will break down the implementation into chunks, as per the necessary components listed above for the decoupled system to work.

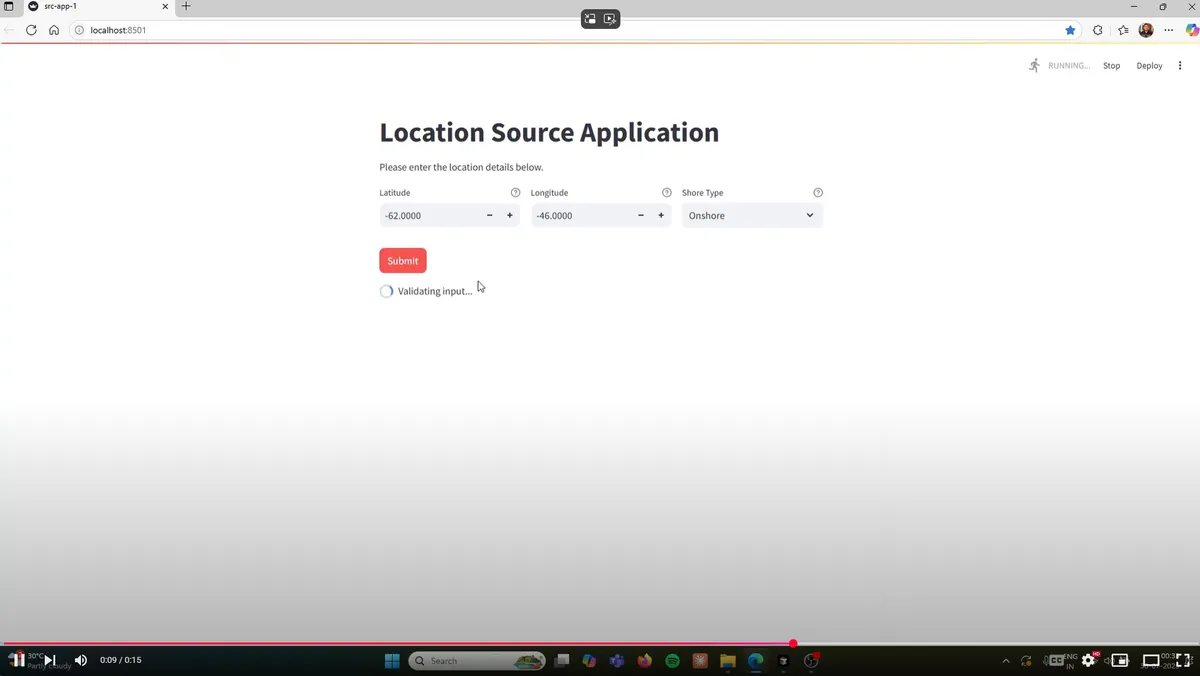

Source Application

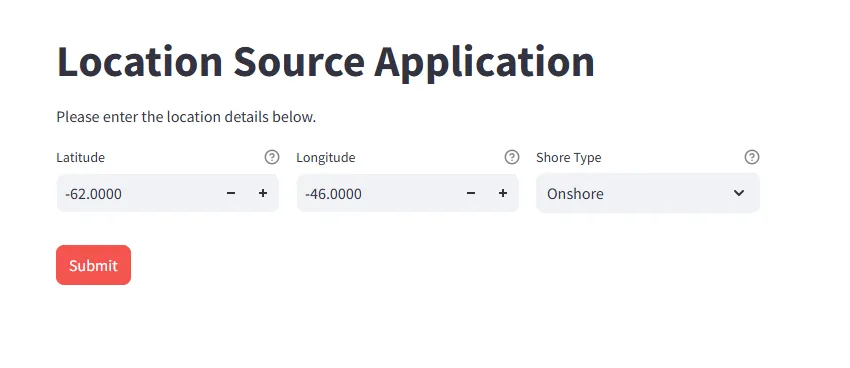

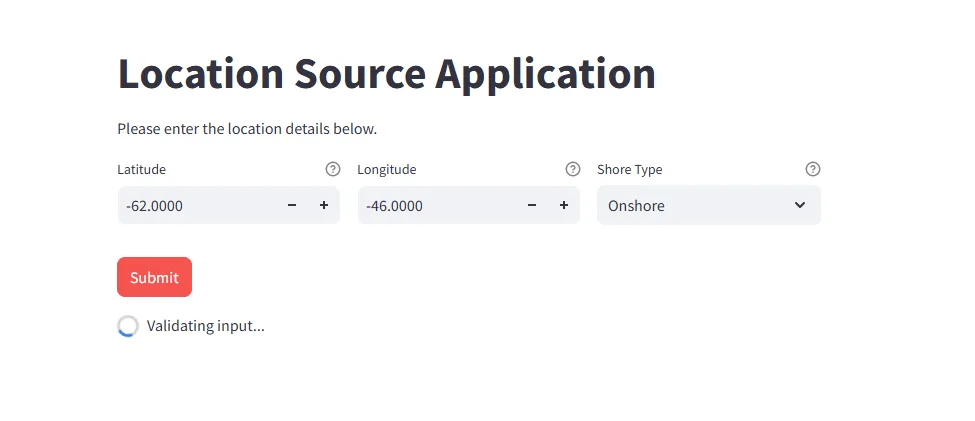

Let's create our mock 'source application'. All the module does is take the values described above as input and send them to the Event API, before waiting for a confirmatory response from the DQ system. For simplicity, we will use Streamlit, a Python-based web app development framework. This is how the application looks when started.

Note that the "Shore" is chosen as Onshore - blasphemously inaccurate! We will submit this and see what happens. On clicking submit, the validation event is triggered. This means that it is time to explore the next components: the Event Platform API and the Message Queue.

Event Platform API & Event Queue

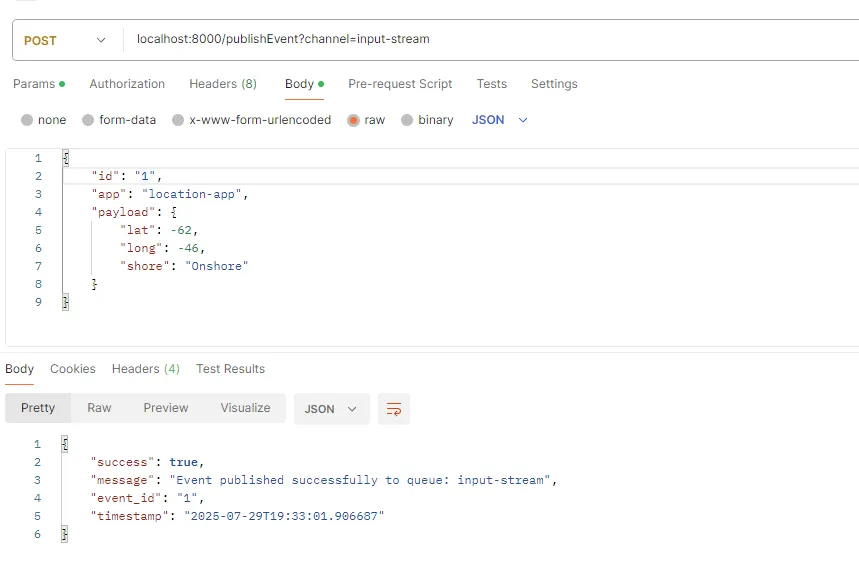

The Event Platform API is the backbone of the system: it is the central component that handles messages from source systems and communicates with both the Event Queue and the DQaaS Gateway. It has two endpoints. The /publishEvent endpoint is a POST method that submits an event to the Event Queue. To retrieve an event (or all events), we use the GET /getEvent endpoint.

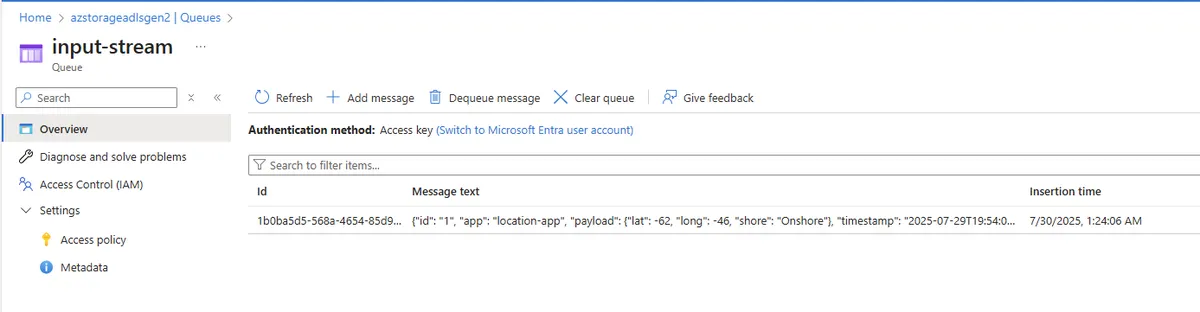

For our Proof-of-Concept work, I've chosen Azure Queues as the event message channel. For production applications, a Service Bus or a streaming solution (such as Kafka or Azure Event Hubs) is widely used. The Azure Queues setup has two channels: an input-stream and a response-stream. The input-stream stores all input events fired from source applications. The response-stream keeps a record of all responses from the DQaaS system.
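The interplay between the two endpoints and the two channels can be sketched with an in-memory stand-in. This is only an illustration of the flow: the class and method names are hypothetical, plain Python lists replace Azure Queues, and the option to fetch by message ID is an assumption about how /getEvent might behave.

```python
import uuid


class InMemoryEventPlatform:
    """Stand-in for the Event Platform API, backed by two in-memory lists."""

    def __init__(self):
        self.streams = {"input-stream": [], "response-stream": []}

    def publish_event(self, stream, body, message_id=None):
        """Mirrors POST /publishEvent: append an event and return its message ID."""
        message_id = message_id or str(uuid.uuid4())
        self.streams[stream].append({"message_id": message_id, "body": body})
        return message_id

    def get_event(self, stream, message_id=None):
        """Mirrors GET /getEvent: remove and return the oldest event, or the one with message_id."""
        queue = self.streams[stream]
        for index, event in enumerate(queue):
            if message_id is None or event["message_id"] == message_id:
                return queue.pop(index)
        return None


platform = InMemoryEventPlatform()

# A source application publishes an input event
mid = platform.publish_event("input-stream", {"latitude": -60.0, "longitude": -30.0, "shore": "Onshore"})

# The validation side picks it up and answers on the response-stream with the same ID
picked = platform.get_event("input-stream")
platform.publish_event("response-stream", {"is_valid": False}, message_id=picked["message_id"])

# The source application retrieves the response for its own message ID
reply = platform.get_event("response-stream", message_id=mid)
print(reply)
```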

From where we left off on the source application - the submission fires an event to the Platform API. Behind the scenes, this is how the API call works:
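As a rough sketch of such a call, the snippet below builds the POST request using only the Python standard library. The base URL, the JSON field names, and the idea that the API returns a `message_id` are all assumptions for illustration; only the /publishEvent path and the three input values come from the design described here.

```python
import json
import urllib.request

EVENT_API_BASE = "https://event-platform.example.com"  # hypothetical base URL


def build_publish_request(latitude, longitude, shore):
    """Build (but do not send) the POST /publishEvent request for one data entry."""
    body = {
        "source_app": "location-app",
        "payload": {"latitude": latitude, "longitude": longitude, "shore": shore},
    }
    return urllib.request.Request(
        url=f"{EVENT_API_BASE}/publishEvent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_publish_request(-60.0, -30.0, "Onshore")
print(request.get_method(), request.full_url)

# Sending is left commented out because the URL above is a placeholder:
# with urllib.request.urlopen(request, timeout=10) as resp:
#     message_id = json.load(resp)["message_id"]  # assumed response field
```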

The Azure Queue input-stream now has an event logged, as can be verified from the portal.

DQaaS Gateway Listener & Microservices Catalog

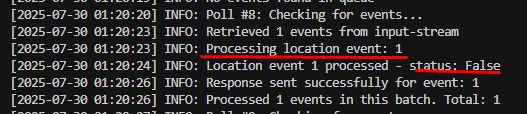

The DQaaS Gateway is a standardized interface that polls the message queue (the input-stream) for active events. If it finds an event from a source application, it parses the field parameters and redirects the event to the underlying microservice that handles the validation job. This gateway, too, uses the Event Platform APIs. Note that it is an independent service that has to be deployed on a compute engine. The Gateway's polling interval largely determines the end-to-end (E2E) latency of the entire system.
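The polling behaviour can be sketched as a simple loop. The function and parameter names below are hypothetical, and the queue and validator are replaced by local stand-ins so the example runs on its own. The `max_polls` argument exists only so the demonstration terminates; a deployed listener would presumably run indefinitely.

```python
import time
from collections import deque


def run_gateway(fetch_event, validate, publish_response, poll_interval=1.0, max_polls=None):
    """Poll for events, validate each one, and publish a response with the same message ID."""
    polls = 0
    while max_polls is None or polls < max_polls:
        polls += 1
        event = fetch_event()
        if event is None:
            # An idle queue means a new event may wait up to poll_interval before pickup
            time.sleep(poll_interval)
            continue
        is_valid = validate(event["body"])
        publish_response({"message_id": event["message_id"], "is_valid": is_valid})


# Local stand-ins for the input-stream and response-stream
input_stream = deque([{"message_id": "abc-123", "body": {"shore": "Onshore"}}])
response_stream = []

run_gateway(
    fetch_event=lambda: input_stream.popleft() if input_stream else None,
    validate=lambda body: False,  # placeholder validator that rejects everything
    publish_response=response_stream.append,
    poll_interval=0.01,
    max_polls=3,
)
print(response_stream)
```

A shorter `poll_interval` lowers latency at the cost of more calls against the queue, which is the trade-off to tune for a given workload.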

Given that we have an event now logged into the input-stream queue, the Gateway Listener detects and picks it up:

Notice how the gateway picks up the event and responds with a status of False, meaning that the validation has failed. What's going on?

As explained, under the hood, the gateway is a redirection service that passes the payload to the appropriate microservice in a wider catalog. It essentially does a lookup to match the incoming event to the microservice that handles the relevant DQ validation, and then sends a response carrying the original message ID to the response-stream. In our case, the microservice catalog is built on Azure Functions. Each function is called a microservice because it is responsible only for answering one extremely specialized check - here, validating whether the coordinates are onshore or offshore.
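The lookup step might look something like the sketch below, where a dictionary maps each source application to its validator. Local functions stand in for the Azure Functions, the validator body is a toy bounding-box rule rather than a real geospatial check, and rejecting events from unregistered applications is an assumed policy, not something prescribed by the design.

```python
CATALOG = {}  # source application name -> validator callable


def register(source_app):
    """Decorator that adds a validator to the catalog under source_app."""
    def decorator(func):
        CATALOG[source_app] = func
        return func
    return decorator


@register("location-app")
def location_app_validator(payload):
    """Stub check: a point counts as on land only inside a made-up bounding box."""
    lat_min, lat_max, lon_min, lon_max = 0.0, 10.0, 0.0, 10.0  # toy 'land', not real geography
    on_land = (lat_min <= payload["latitude"] <= lat_max
               and lon_min <= payload["longitude"] <= lon_max)
    return payload["shore"] == ("Onshore" if on_land else "Offshore")


def dispatch(event):
    """Find the validator for the event's source application and build the response."""
    validator = CATALOG.get(event["source_app"])
    if validator is None:
        # Assumption: fail closed when no validator is registered for the source
        return {"message_id": event["message_id"], "is_valid": False,
                "reason": "no validator registered"}
    return {"message_id": event["message_id"],
            "is_valid": validator(event["payload"]), "reason": ""}


event = {
    "message_id": "abc-123",
    "source_app": "location-app",
    "payload": {"latitude": -60.0, "longitude": -30.0, "shore": "Onshore"},
}
result = dispatch(event)
print(result)
```

Adding a new DQ rule then means registering one more validator, with no change to the gateway or to the source applications.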

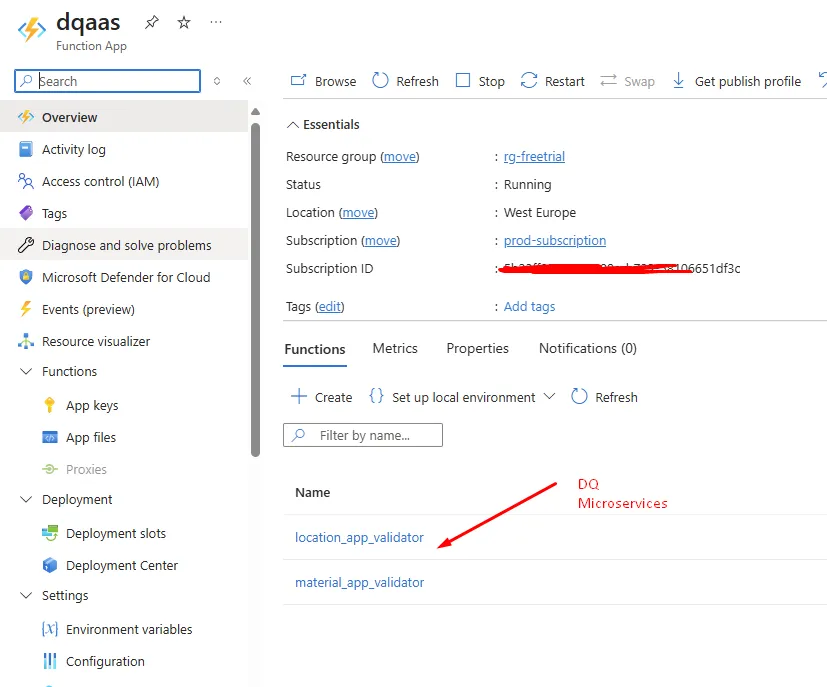

Given below is a catalog representation. You can see that there are two validation microservices already available.

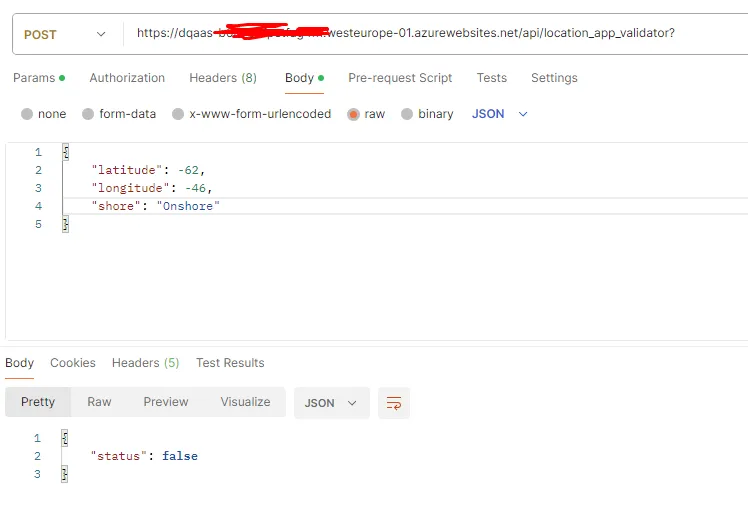

In this case, we are using the location-app, so the location_app_validator is triggered:

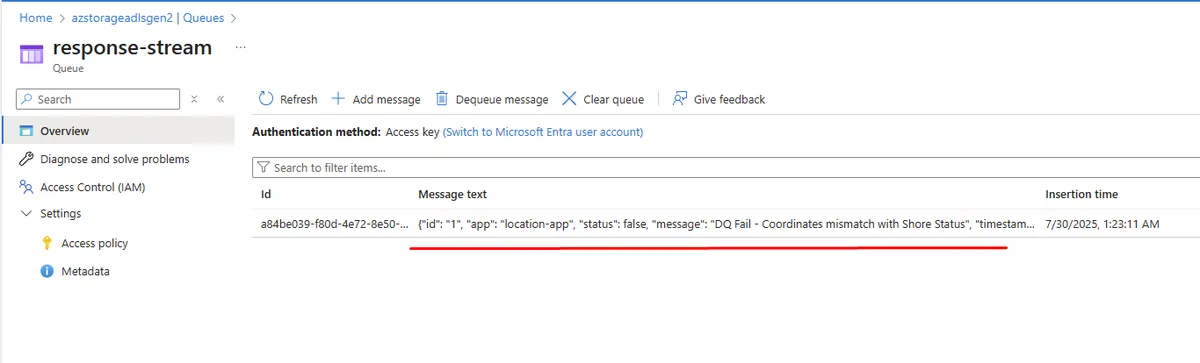

The response is False, meaning that the DQ criterion is not met. If you remember, this is what the gateway responded with. We can verify this in the Azure Queue response-stream as well.

Response Handling in the Source Application

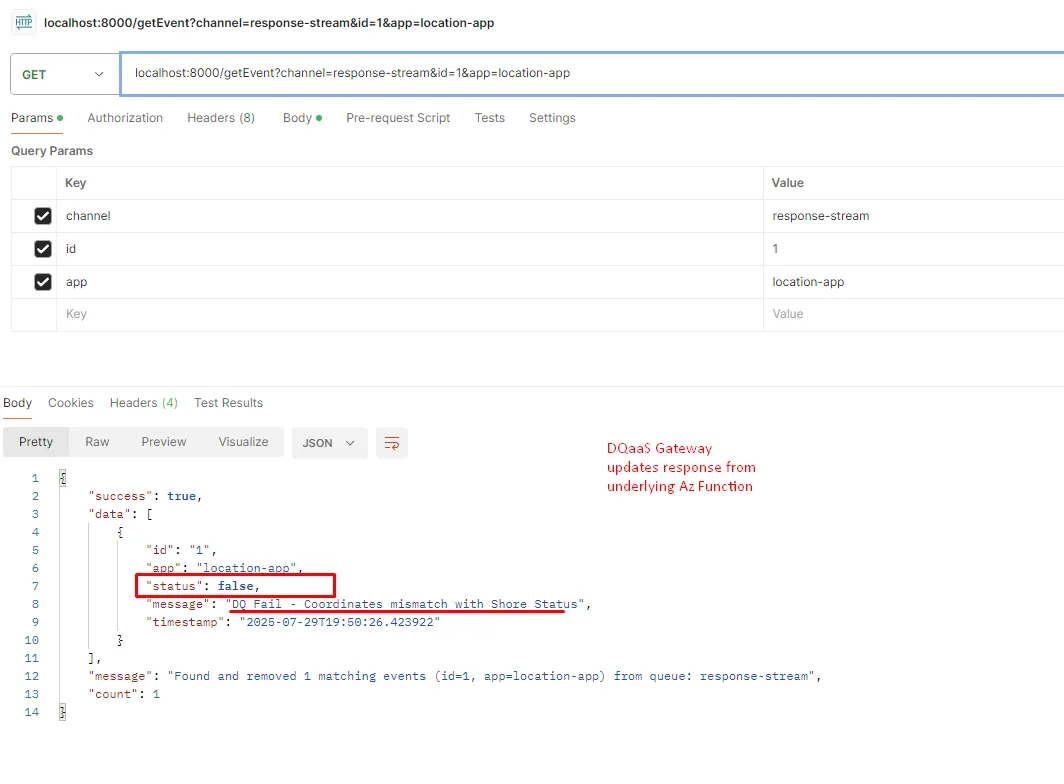

Once the source application has created the event, it starts expecting a response from the validation service on the response-stream. It does so by repeatedly calling the GET /getEvent endpoint, which checks the response-stream channel for the message ID that was just generated.
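A minimal sketch of this wait-for-response loop is shown below. The helper name, the timeout, and the shape of the response are assumptions; `fake_get_event` is a local stand-in for the GET /getEvent call so that the example runs without a live API.

```python
import time


def wait_for_response(get_event, message_id, timeout=10.0, poll_interval=0.5):
    """Poll until a response for message_id arrives, or return None once the timeout elapses."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        response = get_event(message_id)
        if response is not None:
            return response
        time.sleep(poll_interval)
    return None


attempts = {"count": 0}


def fake_get_event(message_id):
    """Stand-in for GET /getEvent: the response only appears on the third poll."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        return None
    return {"message_id": message_id, "is_valid": False}


result = wait_for_response(fake_get_event, "abc-123", timeout=2.0, poll_interval=0.01)

if result is None:
    print("Validation timed out")
elif result["is_valid"]:
    print("Record accepted")
else:
    print("Record rejected")
```

Bounding the wait with a timeout keeps the data entry screen from hanging if the validation service is unavailable; what the application should do in that case is a policy decision for each source system.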

The Event Platform API removes these events from the queue once they are consumed, to keep it congestion-free. With the response in hand, the source application can now reject the data entered and show an explanatory message so that the user can fix the data at source!

In the alternate case where valid data is entered, the system correctly approves it. The data is committed to the application database only if it is validated.

We have built an elementary - but working - DQaaS system!

Here is a link to the entire input experience - through screen capture.

Takeaways and Considerations

The DQaaS approach challenges the status quo, which holds that Data Quality is easier to address retrospectively. With a one-time investment in building the setup right, and subsequent maintenance of the DQ microservices catalog, the data is right the first time. A key benefit of the approach is that specialised teams can stick to their expertise in a decoupled architecture like this. The source system teams only have to integrate an API to trigger an event and listen for the response, while the complex validation logic is kept abstracted behind the DQ Gateway, defined by those who know the validation rules best.

The proposed solution design is also cost-effective, as it is built largely on serverless components and is event-driven by design. One could replace the underlying Azure stack with that of any other cloud provider, and the design would remain more or less the same.